Deep Reinforcement Learning-Based Maintenance Optimization for Infrastructure Networks

Deep reinforcement learning combined with graph convolutional networks for optimal grouping and scheduling of maintenance actions across geographically distributed infrastructure assets.

The Problem

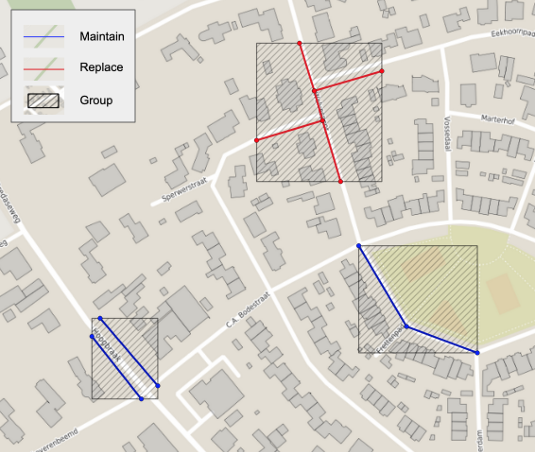

Infrastructure networks such as sewer systems, water mains, and road segments consist of thousands of assets that deteriorate at different rates depending on age, material, and environmental conditions. Maintenance planners face a combinatorial challenge: deciding which assets to maintain or replace, when to intervene, and how to group nearby interventions to reduce setup and mobilization costs. Traditional approaches either react to failures (too late) or apply fixed time-based schedules (too wasteful), and neither accounts for the spatial relationships between assets or the cost savings that come from coordinating nearby work.
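To make the grouping incentive concrete, here is a minimal sketch of the cost arithmetic. It assumes a simple cost model that the text above does not specify: each crew visit pays one fixed setup fee, plus a per-asset work cost. The function name, asset IDs, and cost figures are all hypothetical and chosen only for illustration.

```python
def maintenance_cost(groups, unit_cost, setup_cost):
    """Total cost when every group of assets shares a single setup fee.

    groups:     list of lists of asset IDs; each inner list is one crew visit
    unit_cost:  dict mapping asset ID -> cost of the work on that asset
    setup_cost: fixed mobilization cost paid once per visit (assumed model)
    """
    total = 0.0
    for group in groups:
        if not group:
            continue  # an empty visit costs nothing
        total += setup_cost + sum(unit_cost[asset] for asset in group)
    return total


# Hypothetical figures: three nearby pipes with different repair costs.
unit_cost = {"p1": 4.0, "p2": 5.0, "p3": 3.0}

# Three separate visits pay the setup fee three times.
separate = maintenance_cost([["p1"], ["p2"], ["p3"]], unit_cost, setup_cost=10.0)

# One coordinated visit pays it once.
grouped = maintenance_cost([["p1", "p2", "p3"]], unit_cost, setup_cost=10.0)

saving = separate - grouped
```

Under this assumed model, the saving from grouping equals the setup fee times the number of visits avoided. This is why the spatial proximity of assets matters to the schedule.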

Our Approach

We developed a deep reinforcement learning (DRL) framework that uses Graph Convolutional Networks (GCNs) to learn maintenance policies directly from asset data. The GCN encodes the spatial topology of the asset network, so the DRL agent can take the condition of neighboring assets into account when selecting actions. The agent learns to group geographically close maintenance actions, reducing mobilization and setup costs while maintaining adequate reliability across the network. The framework was evaluated on a real-world dataset of 942 sewer pipes with historical inspection records.
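The sketch below illustrates how a graph convolution lets each asset "see" its neighbors. It is not the framework's actual implementation. It assumes the widely used propagation rule ReLU(D^-1/2 (A + I) D^-1/2 H W), and the page does not say which GCN variant was used. The three-pipe chain, the single condition feature, and the weight matrix are hypothetical.

```python
import math


def normalized_adjacency(edges, n):
    """Build D^-1/2 (A + I) D^-1/2 for an undirected graph with n nodes."""
    # Start from the identity so every node keeps its own features (self-loops).
    a = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for i, j in edges:
        a[i][j] = 1.0
        a[j][i] = 1.0
    deg = [sum(row) for row in a]
    return [[a[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]


def matmul(x, y):
    """Plain-Python matrix product of two lists of lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))]
            for i in range(len(x))]


def gcn_layer(a_hat, features, weights):
    """One graph-convolution step: aggregate neighbors, project, apply ReLU."""
    z = matmul(matmul(a_hat, features), weights)
    return [[max(0.0, v) for v in row] for row in z]


# Hypothetical network: three pipes connected in a chain 0 - 1 - 2.
edges = [(0, 1), (1, 2)]
a_hat = normalized_adjacency(edges, n=3)

# One feature per pipe: a deterioration score; only pipe 0 is degraded.
features = [[1.0], [0.0], [0.0]]
weights = [[1.0]]  # identity projection, so only the aggregation is visible

out = gcn_layer(a_hat, features, weights)
```

After one layer, pipe 1's representation is non-zero because it is adjacent to the degraded pipe 0, while pipe 2, two hops away, is still zero. Stacking layers widens this neighborhood. A policy network built on such embeddings can then pick up that a pipe's neighbors are also candidates for intervention.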

Key Results

Technologies Used
